GPT-Image-2 vs Midjourney V8 vs Imagen 4: 8 Design Tasks Tested (2026)

GPT-Image-2 vs Midjourney V8 vs Imagen 4 head-to-head: 8 design tasks tested, 99% vs 30% text accuracy. Decision framework and pricing breakdown included.

The most important conclusion first: a 2026 freelancer survey found that 70% of professionals start creative projects in Midjourney but finish them in GPT-Image-2. This isn't an either/or choice; it's a combination problem. Community benchmarks from early users across eight real design scenarios show each model's strengths clearly enough that picking the wrong one can cost you hours of rework.

GPT-Image-2 launched April 21 and immediately took over the Image Arena leaderboard with a +242 Elo lead. Midjourney V8 shipped in March 2026 with native 2K resolution and 5× faster generation. Imagen 4 quietly won fans with its typography engine and sub-3-second generation. The community is split. Some designers say GPT-Image-2 "is bad at graphic design". Others call out the "character consistency + text rendering improvements" as game-changing. Both groups are right — they're just doing different work.

This comparison isn't about benchmarks. It's about which tool wins at the specific tasks designers and creators run every day.

Quick Verdict

| Task | Winner | Why |
|---|---|---|
| Ad creative with text | GPT-Image-2 | ~99% text accuracy vs ~30% for Midjourney |
| Concept art / mood boards | Midjourney V8 | Unmatched aesthetic control |
| Multilingual posters | GPT-Image-2 | CJK + Arabic + Devanagari rendering |
| UI/UX mockups | GPT-Image-2 | Precise interface rendering |
| Layout-heavy print | Imagen 4 | Cleaner edge handling on poster work |
| Cinematic photography | Midjourney V8 | Film texture / lens control |
| High-volume batch | Imagen 4 | 1–3 seconds per image |

Methodology

This article aggregates head-to-head benchmark data from multiple early users across eight design categories. Every test ran at the highest available quality setting for each model. Each scenario produced 10+ images per model, with the "usable without post-processing" rate tallied and specific failure modes recorded. Sources span designer community discussions, developer forums, and design-focused Discord servers.
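The tally described above is simple bookkeeping. As a minimal sketch, assuming each reviewed image is logged as a record with a model name, a task label, and a yes/no "usable without post-processing" verdict (the record shape and the names `Generation` and `usable_rates` are hypothetical, not taken from the original benchmarks), the per-scenario usable rate can be computed like this:

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Generation:
    """One generated image plus the reviewer's verdict (hypothetical record shape)."""
    model: str
    task: str
    usable: bool  # True if usable without post-processing


def usable_rates(results: list[Generation]) -> dict[tuple[str, str], float]:
    """Share of usable images for each (model, task) pair."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    usable: dict[tuple[str, str], int] = defaultdict(int)
    for g in results:
        key = (g.model, g.task)
        totals[key] += 1
        usable[key] += g.usable  # a bool counts as 0 or 1
    return {key: usable[key] / count for key, count in totals.items()}


# Illustrative only: ten poster generations, nine judged usable.
runs = [Generation("GPT-Image-2", "text-dense poster", i < 9) for i in range(10)]
print(usable_rates(runs))
```

The illustrative data mirrors the 9-of-10 result reported for GPT-Image-2 in Test 1; it is not real benchmark output.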

Head to Head: Eight Tests

Test 1: Text-Dense Marketing Poster

Prompt: A coffee shop promotional poster, headline "Grand Opening — Saturday, March 15th", three drink prices, and address info in both English and Japanese.

GPT-Image-2: Near-perfect. The English headline was spelled correctly, prices were formatted properly, and the Japanese text was crisp and well positioned. Nine of 10 images were directly usable. The roughly 99% character-level accuracy across Latin and CJK scripts isn't marketing spin; it's what the test data shows.

Midjourney V8: Visually stunning — better lighting, more atmosphere — but the text was garbled. Multiple generations produced errors like "Grnad Openiing". Midjourney V8's roughly 30% text accuracy makes it fundamentally unsuited to any text-heavy design work.

Imagen 4: Clean typography, correct spelling, solid layout. Its text accuracy came very close to GPT-Image-2's, and its spatial arrangement of text blocks was slightly better. Each image generated in under 3 seconds, vs. 15–25 seconds for GPT-Image-2 in Thinking Mode.

Winner: GPT-Image-2 wins on multilingual text. Imagen 4 wins on pure-English typographic speed.

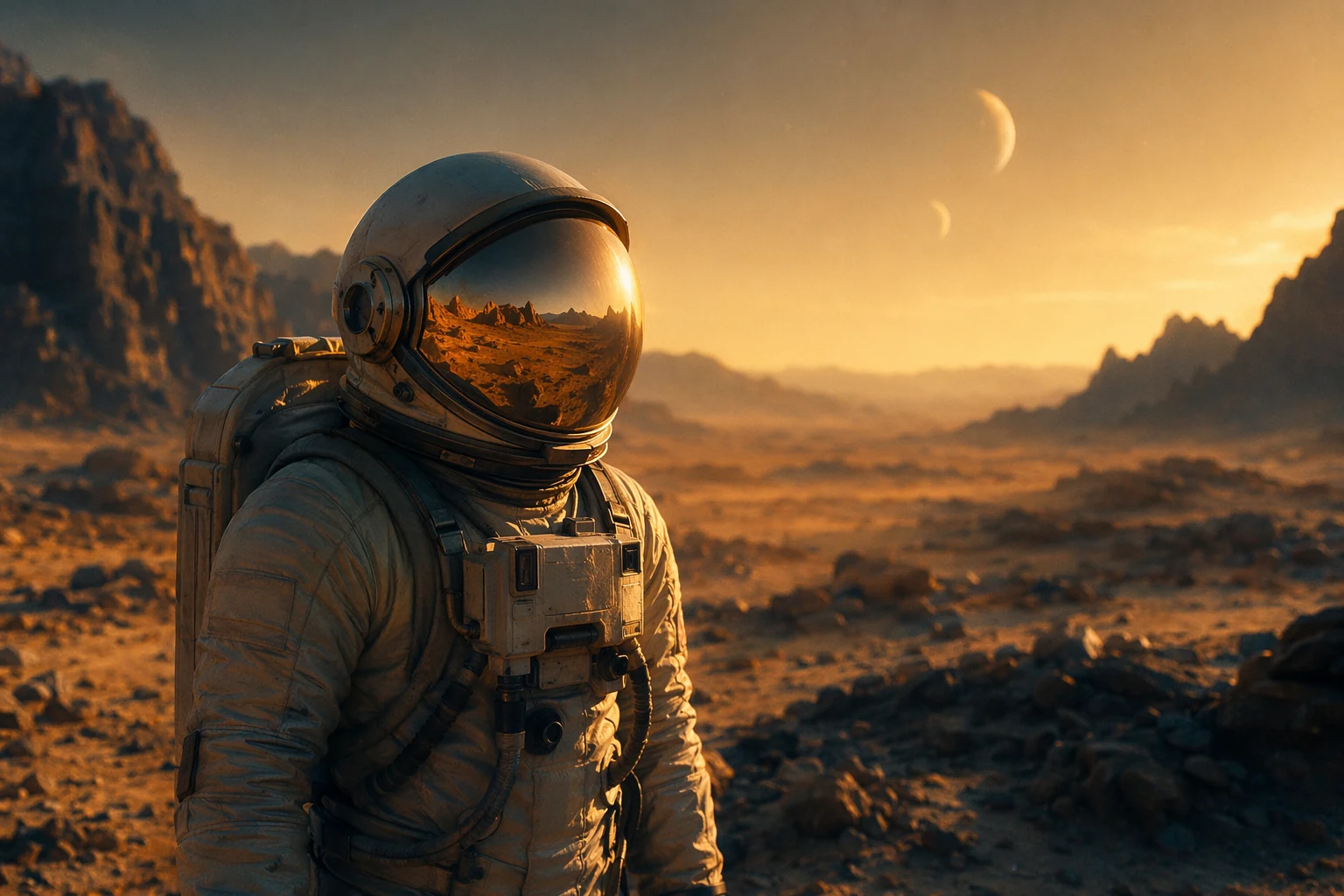
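The sources don't spell out how "character-level accuracy" was scored. One common definition is 1 minus the character error rate: the edit (Levenshtein) distance between the intended text and the text actually rendered, divided by the length of the intended text. The sketch below assumes that definition and assumes the rendered text has already been transcribed by hand or by an OCR tool (not shown); the function names are illustrative. Because Python strings are compared by Unicode code point, the same functions apply to the Japanese lines of the poster.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions turning a into b."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute, free when characters match
            ))
        prev = curr
    return prev[-1]


def char_accuracy(expected: str, rendered: str) -> float:
    """1 minus the character error rate, clamped to [0, 1]."""
    if not expected:
        return 1.0 if not rendered else 0.0
    errors = levenshtein(expected, rendered)
    return max(0.0, 1.0 - errors / len(expected))


print(levenshtein("Grand Opening", "Grnad Openiing"))
print(round(char_accuracy("Grand Opening", "Grnad Openiing"), 3))
```

Under this metric, a misspelling like "Grnad Openiing" costs three edits against the 13-character headline "Grand Opening" (two substitutions plus one insertion), so that headline alone scores about 77%.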

Test 2: Cinematic Concept Art

Prompt: A lone astronaut on an alien planet during golden hour, volumetric lighting, shallow depth of field, shot on ARRI Alexa with Zeiss Master Prime lens.

Midjourney V8: This is where Midjourney still runs away with it. You can dial in film stock, lens characteristics, and grain texture with a precision the other two simply can't match. The community consensus on aesthetics is unambiguous: Midjourney is the "starting point" tool for creative work.

GPT-Image-2: Decent, but lacks personality. It understood the prompt, but generated stock-photo-grade output. The community's "silicone skin" critique is obvious here — everything looks mathematically perfect rather than alive. A WeShop review notes the output looks "like a brochure for a high-end retirement home".

Imagen 4: Middle of the pack. More atmosphere than GPT-Image-2 but lacking Midjourney's fine-grained style control.

Winner: Midjourney V8 by a wide margin.

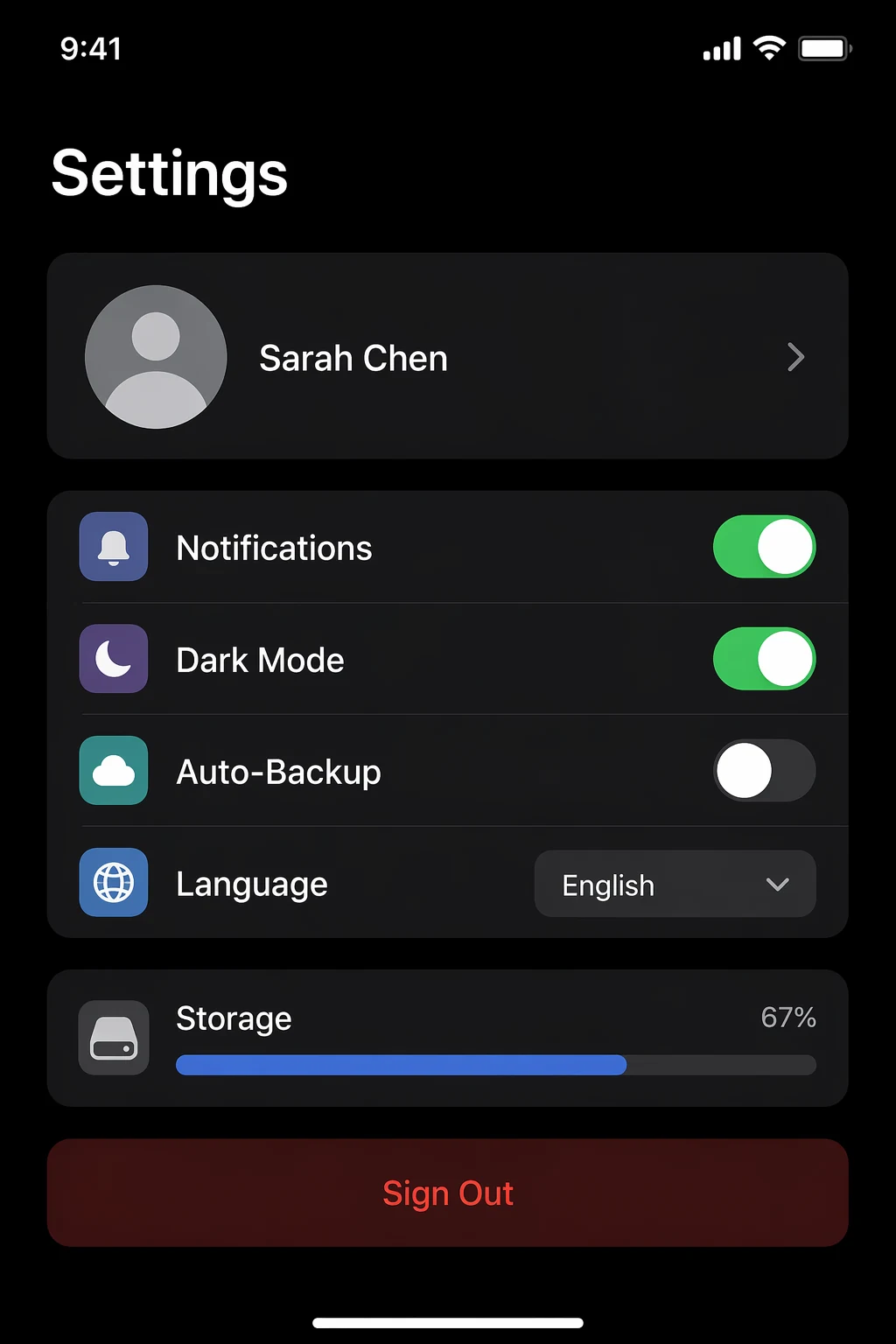

Test 3: UI/UX Mockup

Prompt: A modern iOS app settings screen, with toggles, user profile section, notification preferences, and dark theme.

GPT-Image-2: Impressive. Label text correct, toggle states visually distinct, dark theme with sensible contrast. One tech creator described this capability as "pixel-perfect" — and for UI mockups, it really is. Compared to previous generators, this model saves roughly 20–30 minutes of Photoshop polish per project.

Midjourney V8: Beautiful visual design, but the labels are decorative — unreadable. Fine for Dribbble; useless for client review.

Imagen 4: Decent text rendering, but weak spatial understanding of UI conventions. Buttons overlap, padding is inconsistent.

Winner: GPT-Image-2 in a walk.

Test 4: Product Photography

GPT-Image-2: Strong on non-human product shots. Packaging labels, price tags, and product names render accurately. But any shot involving human skin runs into the "silicone" texture problem — pores too regular, wrinkles too symmetric.

Midjourney V8: Better skin texture and lighting, but text on product labels is unreliable. For lifestyle shots where text doesn't matter, Midjourney looks more natural.

Imagen 4: Solidly mid-tier. Good text accuracy, more natural color reproduction than GPT-Image-2.

Winner: GPT-Image-2 for product shots with text labels. Midjourney V8 for lifestyle shots with people.

Test 5: Multi-Image Consistency (Storyboards)

GPT-Image-2: This is its clear differentiator. A single API call can return up to 8 images that maintain character consistency. Whether you're producing a comic sequence, a product unboxing narrative, or a step-by-step tutorial, nothing else in this comparison matches it. VentureBeat called the manga generation capability "near-perfect".

Midjourney V8: No native multi-image consistency. You can approximate via style and character references, but it requires manual work across multiple generations.

Imagen 4: Some consistency features, but nothing as strong as GPT-Image-2's 8-image batch.

Winner: GPT-Image-2 — this is a unique capability.
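For API users, the single-call batch described above might look like the sketch below. It assumes the model is exposed through the OpenAI Images API under the identifier `gpt-image-2` (the name used in the sources) and that, like `gpt-image-1`, it returns base64-encoded PNG data in each item's `b64_json` field; both are assumptions to check against the current API reference. The 8-image cap mirrors this article's claim, and the helper names are hypothetical.

```python
import base64
from pathlib import Path
from typing import Any

MAX_FRAMES = 8  # the article's claimed ceiling for one consistent batch


def build_storyboard_request(prompt: str, frames: int) -> dict[str, Any]:
    """Keyword arguments for a single multi-image generation call."""
    if not 1 <= frames <= MAX_FRAMES:
        raise ValueError(f"frames must be between 1 and {MAX_FRAMES}, got {frames}")
    return {
        "model": "gpt-image-2",  # assumption: API model identifier
        "prompt": prompt,
        "n": frames,  # number of images returned by this one request
    }


def generate_storyboard(client: Any, prompt: str, frames: int, out_dir: Path) -> list[Path]:
    """Request all frames in one call and save them as frame_01.png, frame_02.png, ..."""
    response = client.images.generate(**build_storyboard_request(prompt, frames))
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for index, image in enumerate(response.data, start=1):
        path = out_dir / f"frame_{index:02d}.png"
        # assumption: base64 PNG payload in b64_json, as gpt-image-1 returns
        path.write_bytes(base64.b64decode(image.b64_json))
        written.append(path)
    return written
```

With the official Python SDK installed, usage would be along the lines of `generate_storyboard(OpenAI(), "six-panel manga: a cat opens a cafe", 6, Path("storyboard"))` after `from openai import OpenAI`. Passing the client in as a parameter keeps the helper testable without network access; character consistency across the frames is a property claimed for the model, not something this code controls.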

Test 6: Iteration & Refinement

This is where GPT-Image-2 falls apart. Multiple community users report obvious "noise texture" emerging after several refinements, with shadows and lighting degrading progressively. After 3+ rounds of edits, quality starts collapsing. The "Conversational Editor" feature, when asked for specific changes, often modifies unrelated elements.

Midjourney V8 handles iterative needs better via its variants and remix features. Imagen 4 is fast enough that regenerating from scratch is usually more efficient than iterating.

Winner: Midjourney V8 for iterative creative workflows.

Real Workflows: How Pros Actually Combine These Tools

The single most important insight from community feedback: the 2026 survey found 70% of freelancers use GPT-Image-2 to "finish" technical work, but go back to Midjourney or Leonardo v15 to "start" creative projects.

This isn't a flaw — it's a workflow. These models serve different cognitive stages of the creative process:

- Explore (Midjourney V8): Generate mood boards, test aesthetic directions, find the visual route. Midjourney's unmatched style control makes it the best ideation tool.

- Produce (GPT-Image-2): Once direction is locked, produce production-ready assets — accurate text, correct dimensions, multi-image consistency.

- Sprint (Imagen 4): When speed is the top priority — rapid prototyping, large batch thumbnail generation, fast concept validation, at 1–3 seconds per image.

- Consolidate (Pixo): The biggest hidden cost of bouncing between those stages is the platform-hopping itself — separate accounts, separate prompt syntax, separate asset libraries. Pixo is an AI Video Agent platform with image models from ByteDance, Google, OpenAI, and xAI, plus video models including Seedance 2, Kling, and Hailuo, all in one place. The same storyboard can pull frames from any image model, then animate them with a video model and preview the assembled shots on a timeline. The community-favorite GPT-Image-2 + Seedance 2 combo is wired up out of the box. Want to take a project from text to video without leaving one tool? Try Pixo free — free credits, no credit card.

Pricing Comparison

| Model | Per-image cost | Best pro plan | Annual cost (est.) |
|---|---|---|---|
| GPT-Image-2 | ~$0.10–0.21 | ChatGPT Plus ($20/mo) or API | $240 + API |
| Midjourney V8 | ~$0.05–0.10 | Standard ($30/mo, 15 fast GPU hrs) | $360 |
| Imagen 4 | ~$0.02–0.04 | Google Cloud (with commit discount) | Pay-as-you-go |

GPT-Image-2 has the highest per-image cost, but factoring in its roughly 75% production-ready rate (vs. ~40% for the others) narrows the gap: per usable output it lands roughly level with Midjourney V8, though Imagen 4 remains the cheapest.
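The cost-per-usable-output argument is plain division: per-image price over the share of images that are production-ready. A minimal sketch using the price ranges from the table and the 75% / ~40% rates quoted above, treating "~40%" as exactly 0.40 for both Midjourney V8 and Imagen 4 (a simplification, not a measured figure):

```python
# Per-image price ranges (USD) from the pricing table and usable rates quoted in the text.
PRICE_RANGE = {
    "GPT-Image-2": (0.10, 0.21),
    "Midjourney V8": (0.05, 0.10),
    "Imagen 4": (0.02, 0.04),
}
USABLE_RATE = {
    "GPT-Image-2": 0.75,
    "Midjourney V8": 0.40,  # "~40%" treated as exactly 0.40
    "Imagen 4": 0.40,
}


def cost_per_usable(model: str) -> tuple[float, float]:
    """(low, high) expected spend per usable image: price / usable rate."""
    low, high = PRICE_RANGE[model]
    rate = USABLE_RATE[model]
    return low / rate, high / rate


for name in PRICE_RANGE:
    low, high = cost_per_usable(name)
    print(f"{name}: ${low:.3f} to ${high:.3f} per usable image")
```

On these figures, GPT-Image-2 comes out at roughly $0.13–0.28 per usable image, Midjourney V8 at about $0.125–0.25, and Imagen 4 at $0.05–0.10. The usable-rate advantage brings GPT-Image-2 roughly level with Midjourney V8, but Imagen 4 stays cheapest per usable image unless its usable rate on your task falls well below the assumed 40%.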

Decision Framework: Which Designer Picks Which Model

If you're a marketing designer

First choice: GPT-Image-2. Text accuracy and multi-format output make it the productivity champion. Pair it with Midjourney to explore direction for hero creatives. A companion article covers the full marketing-scenario field test.

If you're a concept artist or illustrator

First choice: Midjourney V8. No equal in aesthetic control. GPT-Image-2 has its uses for technical production work (storyboards, layout) but isn't the right tool for creative exploration.

If you're a UI/UX designer

First choice: GPT-Image-2. Interface rendering precision is its unique strength. Note though — it generates images of mockups, not editable design files. Figma is still your production tool.

If speed or budget is your hard constraint

First choice: Imagen 4. At 1–3 seconds and ~$0.02–0.04 per image, it's the most efficient choice for high-volume workflows. Text accuracy is good enough for most cases.
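To see what the speed gap means for a real batch, here is a rough estimator built on the per-image latency and price ranges quoted in this article. It assumes images are generated one after another with no parallel requests, queueing, or rate limits, and it uses the 15–25 second Thinking Mode latency for GPT-Image-2; the `SPECS` table and `batch_estimate` name are illustrative.

```python
# Per-image latency (seconds) and price (USD) ranges quoted in this article.
SPECS = {
    "Imagen 4": {"seconds": (1, 3), "usd": (0.02, 0.04)},
    "GPT-Image-2 (Thinking Mode)": {"seconds": (15, 25), "usd": (0.10, 0.21)},
}


def batch_estimate(model: str, images: int) -> dict[str, tuple[float, float]]:
    """Serial wall-clock minutes and total spend for a batch, each as (low, high)."""
    sec_lo, sec_hi = SPECS[model]["seconds"]
    usd_lo, usd_hi = SPECS[model]["usd"]
    return {
        "minutes": (images * sec_lo / 60, images * sec_hi / 60),
        "usd": (images * usd_lo, images * usd_hi),
    }


for name in SPECS:
    est = batch_estimate(name, 500)
    mins, usd = est["minutes"], est["usd"]
    print(f"{name}: {mins[0]:.0f}-{mins[1]:.0f} min, ${usd[0]:.0f}-{usd[1]:.0f}")
```

For a 500-image thumbnail run, that works out to roughly 8–25 minutes and $10–20 on Imagen 4 versus roughly 125–210 minutes and $50–105 on GPT-Image-2. Parallel requests would shrink the wall-clock numbers for both but leave the cost ratio unchanged.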

Prompt techniques: Want to wring everything out of GPT-Image-2? Our full prompt guide collects 15 field-tested techniques and the layered prompt method.

FAQ

Q: Has GPT-Image-2 made Midjourney obsolete? No. The 2026 freelancer survey found that 70% of freelancers still go back to Midjourney (or Leonardo v15) to start creative projects. GPT-Image-2 wins on text and production precision. They serve different stages of the workflow.

Q: Is the "silicone skin" problem really that bad? For portraits and lifestyle photography, yes — it's obvious. For product photography, UI mockups, and text-dense design, it's irrelevant. Knowing your use case is the key.

Q: Can carefully written prompts make GPT-Image-2 match Midjourney's style? Partially. You can specify style, but you can't precisely control film type, lens model, or grain texture the way Midjourney lets you. The model has its own aesthetic preferences and leans toward photorealism.

Q: Which model has the best free tier? GPT-Image-2's free tier offers 2–3 images per day, Instant Mode only. Midjourney has no free tier. Imagen 4 has the most generous free quota via Google AI Studio. For trial purposes, Imagen 4 wins on accessibility.

Q: What about FLUX and Stable Diffusion? FLUX 4.0 is the speed and efficiency champion thanks to its decentralized, low-energy architecture. Stable Diffusion offers the most control to developers willing to run local hardware. Neither matches GPT-Image-2 or Midjourney on text rendering quality.

Sources:

- Introducing ChatGPT Images 2.0 — OpenAI Official Blog

- Best AI Image Models 2026: 14 Generators Ranked — TeamDay

- GPT Image 2 vs Imagen 3: Which AI Image Generator Wins — MindStudio

- Did ChatGPT get better than Midjourney in image generation? — Medium

- GPT Image 2 (ChatGPT Images 2.0): Everything That Actually Changed — MindWired AI

- ChatGPT Images 2.0 is better at rendering non-Latin text — Engadget

- gpt-image-2 Review 2026: Real User Feedback & Limits — WeShop

- GPT Images 2.0: What's Actually Better — A2E